- Home

- Services

- About

- News

- Contact

- Kedarnath movie free online watch

- Celesticomp v celestial navigation calculator

- Leap office software free download

- Swam engine keygen

- Autocad 2019 download free trial

- Prime directive rpg 5e

- Critical ops credit hack

- Board view

- Pandian stores mullai in saree

- How rocket league fan rewards work

- Drunken dnd name generator

- Gulim font outlook

- Farming simulator 2014 rus

- Beas radha soami shabad

- Book club questions the silent patient

- Resident evil revelations trainer cheathappens piratebay

- Does unlockbase work with phones still on contract

- Do i need adobe acrobat 7-0 professional

- Millport houses

- After effects apprentice meyer 4th edition pdf

- Trek oclv carbon 120

- Free beginner excel courses online

- Install windirstat

- Cheat codes to need for speed most wanted ps2

- Wrestling spirit 3 movesets

- Play shopping cart hero 5

- How to download garageband 10-0-3 to 10-11-6 download

Although celestial objects (the Sun, the Moon, planets, stars, etc.) are at a wide range of distances from us, to locate them in space we only need to know their direction. The easiest way to do this is to assume that all objects in space are at the same distance, on the inside of an imaginary celestial sphere.
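As a rough illustration of the "direction only" idea, the sketch below represents each object as a unit vector on the celestial sphere and measures the angle between two directions. The use of right ascension and declination as the two angles, and the function names `direction` and `angular_separation_deg`, are assumptions made for this example; they do not come from the text above.

```python
import math

def direction(ra_deg, dec_deg):
    """Unit vector for a direction on the celestial sphere.

    ra_deg and dec_deg are assumed to be right ascension and declination
    in degrees (a hypothetical choice of coordinates for this sketch).
    """
    ra = math.radians(ra_deg)
    dec = math.radians(dec_deg)
    return (
        math.cos(dec) * math.cos(ra),
        math.cos(dec) * math.sin(ra),
        math.sin(dec),
    )

def angular_separation_deg(a, b):
    """Angle in degrees between two unit vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    dot = max(-1.0, min(1.0, dot))
    return math.degrees(math.acos(dot))

if __name__ == "__main__":
    pole = direction(0.0, 90.0)       # a point at declination +90 degrees
    equator = direction(123.0, 0.0)   # any point at declination 0 degrees
    print(angular_separation_deg(pole, equator))
```

Because every object is placed at the same nominal distance, only the two angles matter; the actual distance never enters the calculation.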

The dimmer an object appears, the higher the numerical value given to its apparent magnitude. The Hubble Space Telescope has located stars with magnitudes of 30 at visible wavelengths, and the Keck telescopes have located similarly faint stars in the infrared. As the amount of light received actually depends on the thickness of the Earth's atmosphere in the line of sight to the object, the apparent magnitudes are adjusted to the value they would have in the absence of the atmosphere.

Note that brightness varies with distance: an extremely bright object may appear quite dim if it is far away. Brightness varies inversely with the square of the distance. The absolute magnitude, M, of a celestial body (outside the Solar System) is the apparent magnitude it would have if it were at 10 parsecs (~32.6 light years), and that of a planet (or other Solar System body) is the apparent magnitude it would have if it were 1 astronomical unit from both the Sun and Earth. The absolute magnitude of the Sun is 4.83 in the V band (yellow) and 5.48 in the B band (blue).

The apparent magnitude, m, in the band, x, can be defined as

$$m_x - m_{x,0} = -2.5 \log_{10}\left(\frac{F_x}{F_{x,0}}\right)$$

where $F_x$ is the observed flux in the band x, and $m_{x,0}$ and $F_{x,0}$ are a reference magnitude and a reference flux in the same band. For planets and other Solar System bodies, the apparent magnitude is derived from the body's phase curve and its distances to the Sun and the observer.

30 Doradus image taken by ESO's VISTA. This nebula has an apparent magnitude of 8.
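The magnitude definition and the inverse-square law can be combined in a short numerical sketch. Since flux falls off as the square of the distance, moving an object from 10 parsecs to a distance d changes its magnitude by 5 log10(d / 10 pc). The function names and the constant `AU_IN_PARSEC` below are illustrative choices for this example, not part of the text above.

```python
import math

# One astronomical unit expressed in parsecs (1 pc is about 206264.806 AU).
AU_IN_PARSEC = 1.0 / 206264.806

def magnitude_difference(flux, reference_flux):
    """m_x - m_x0 = -2.5 * log10(F_x / F_x0), both fluxes in the same band."""
    return -2.5 * math.log10(flux / reference_flux)

def apparent_from_absolute(absolute_magnitude, distance_pc):
    """Apparent magnitude of a body outside the Solar System at distance_pc.

    Follows from the inverse-square law: flux scales as (10 pc / d)^2,
    so m = M + 5 * log10(d / 10 pc). Extinction is ignored in this sketch.
    """
    return absolute_magnitude + 5.0 * math.log10(distance_pc / 10.0)

if __name__ == "__main__":
    # An object delivering 1/100 of the reference flux is 5 magnitudes fainter.
    print(magnitude_difference(1.0, 100.0))
    # The Sun (absolute V magnitude 4.83) placed at 1 AU instead of 10 pc.
    print(apparent_from_absolute(4.83, AU_IN_PARSEC))
```

The second call gives a value close to the Sun's apparent magnitude of –26.74, which is a useful consistency check between the absolute and apparent figures.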

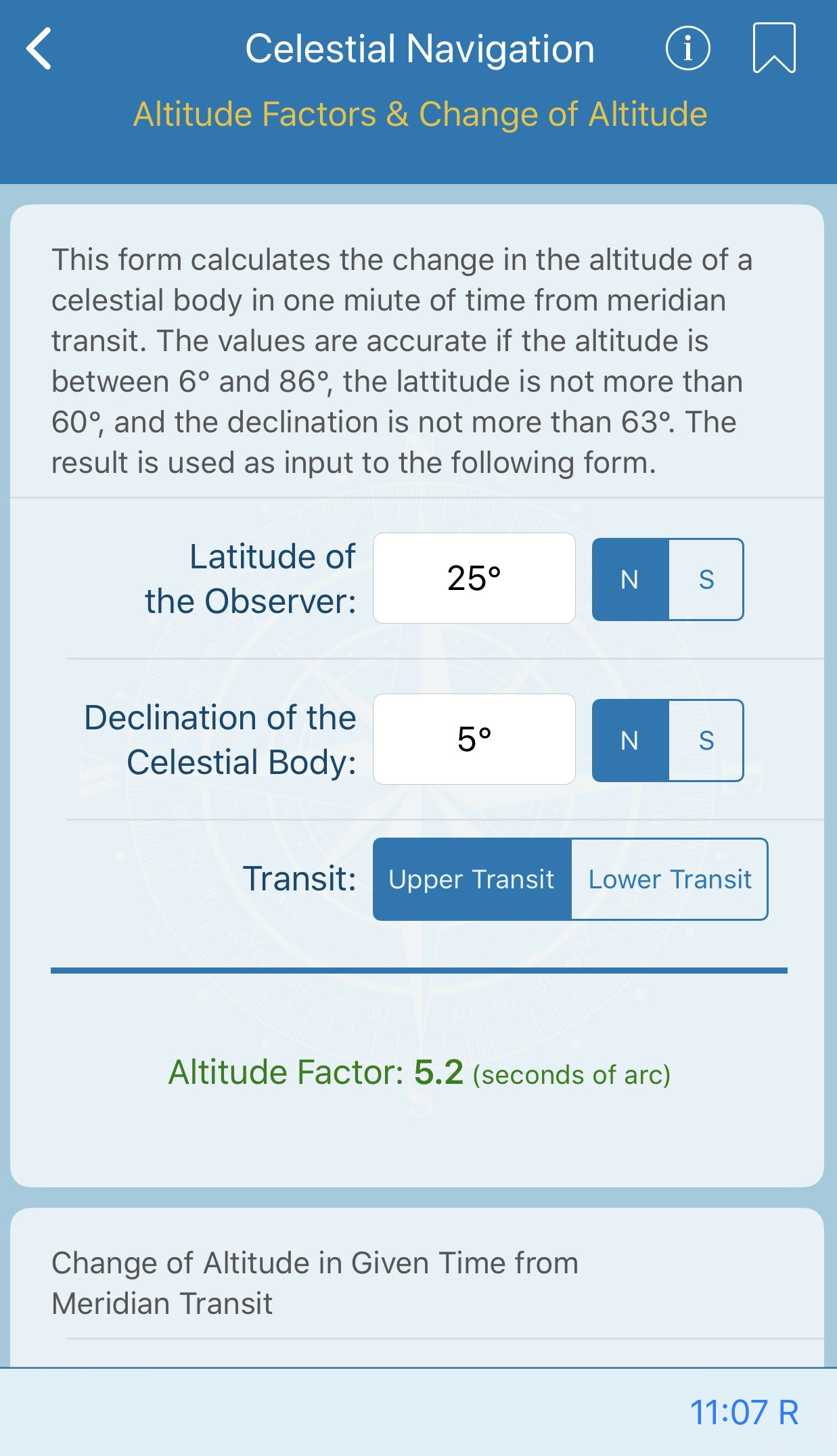

#Celesticomp v celestial navigation calculator full

The scale used to indicate magnitude originates in the Hellenistic practice of dividing stars visible to the naked eye into six magnitudes. The brightest stars in the night sky were said to be of first magnitude (m = 1), whereas the faintest were of sixth magnitude (m = 6), the limit of human visual perception (without the aid of a telescope). Each grade of magnitude was considered twice the brightness of the following grade (a logarithmic scale). This somewhat crude method of indicating the brightness of stars was popularized by Ptolemy in his Almagest, and is generally believed to originate with Hipparchus. This original system did not measure the magnitude of the Sun.

In 1856, Norman Robert Pogson formalized the system by defining a typical first magnitude star as a star that is 100 times as bright as a typical sixth magnitude star; thus, a first magnitude star is about 2.512 times as bright as a second magnitude star. The fifth root of 100 is known as Pogson's Ratio. Pogson's scale was originally fixed by assigning Polaris a magnitude of 2. Astronomers later discovered that Polaris is slightly variable, so they first switched to Vega as the standard reference star, and then switched to using tabulated zero points for the measured fluxes. The magnitude depends on the wavelength band.

The modern system is no longer limited to 6 magnitudes or only to visible light. Very bright objects have negative magnitudes. For example, Sirius, the brightest star of the celestial sphere, has an apparent magnitude of –1.4. The modern scale includes the Moon and the Sun. The full Moon has a mean apparent magnitude of –12.74 and the Sun has an apparent magnitude of –26.74.
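Pogson's definition can be checked numerically. The sketch below computes Pogson's Ratio as the fifth root of 100 and uses it to turn a magnitude difference into a brightness ratio; the name `brightness_ratio` is a hypothetical helper introduced for this example.

```python
# Pogson's Ratio: five magnitudes correspond to a factor of exactly 100.
POGSON_RATIO = 100 ** (1 / 5)  # about 2.512

def brightness_ratio(m_faint, m_bright):
    """How many times brighter the m_bright object appears than the m_faint one."""
    return POGSON_RATIO ** (m_faint - m_bright)

if __name__ == "__main__":
    print(round(POGSON_RATIO, 3))
    # First versus sixth magnitude: a factor of 100 by definition.
    print(brightness_ratio(6.0, 1.0))
    # Sun (-26.74) versus full Moon (-12.74): 14 magnitudes apart,
    # roughly a factor of 4 x 10^5.
    print(brightness_ratio(-12.74, -26.74))
```

Note that the ancient rule of "twice as bright per grade" would give only a factor of 32 across five grades, which is why Pogson's ratio of about 2.512 per magnitude is needed to make five magnitudes equal a factor of 100.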
